The future of LLMs is about edge computing, ubiquitous deployment, and deep personalization. That calls for democratizing LLM technology, which can’t happen without the ReAct paradigm.

The cost must drop. A key technique is Retrieval-Augmented Generation (RAG), which personalizes LLMs without a costly training process (“fine-tuning”).

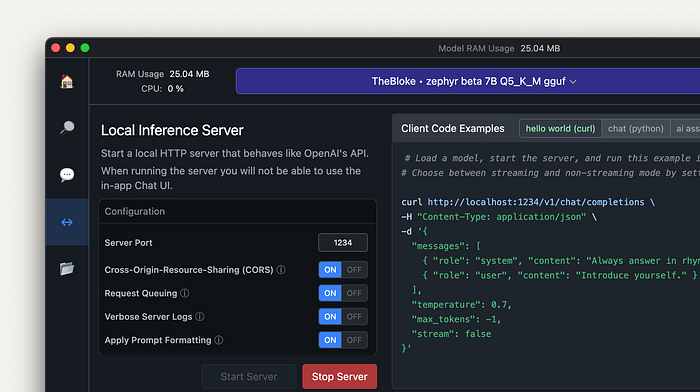

Self-hosting LLMs

Large language models (LLMs) know a lot, but nothing about you (unless you’re famous, in which case please subscribe, except if you’re the fbi.gov/wanted kind of famous). That’s because they are trained on public datasets, and your private information is (hopefully) not public.

We are talking about sensitive data here: bank statements, personal journals, browser history, etc. Call me paranoid, but I don’t want to send any of these over the internet to hosted LLMs in the cloud, even if I paid a hefty amount to keep them good confidants. I want to run LLMs locally.

Many LLMs are freely available, so you can host them offline. To name a few: Llama, Alpaca, Sentret, Mistral, Jynx, and Zephyr. In fact, there are too many options to keep track of ’em all. Proof: two of them are Pokémon, and you didn’t even notice.

Making LLMs know you

To make an LLM relevant to you, your intuition might be to fine-tune it with your data, but:

- Training an LLM is expensive.

- Because training is costly, it’s hard to keep an LLM updated with the latest information.

- Observability is lacking. When you ask an LLM a question, it’s not obvious how the LLM arrived at its answer.

There’s a different approach: Retrieval-Augmented Generation (RAG). Instead of asking the LLM to generate an answer immediately, a framework like LlamaIndex:

- retrieves information from your data sources first,

- adds it to your question as context, and

- asks the LLM to answer based on the enriched prompt.
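The three steps above can be sketched in a few lines of plain Python. This is only a toy illustration, not how LlamaIndex works internally: the documents, the word-overlap retriever, and the `echo_llm` stand-in are all hypothetical, and a real setup would use embeddings and a locally hosted model instead.

```python
import re

# Hypothetical private documents; in practice these come from your own files.
DOCUMENTS = [
    "2023-11-02 bank statement: rent payment of 1200 USD",
    "Journal entry: started learning to bake sourdough bread",
    "Browser history: searched for hiking trails near Lake Tahoe",
]

def tokenize(text: str) -> set[str]:
    """Lowercase a string and split it into a set of word tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def retrieve(question: str, documents: list[str], top_k: int = 1) -> list[str]:
    """Step 1: rank documents by word overlap with the question (a toy retriever)."""
    q = tokenize(question)
    ranked = sorted(documents, key=lambda d: len(q & tokenize(d)), reverse=True)
    return ranked[:top_k]

def build_prompt(question: str, context: list[str]) -> str:
    """Step 2: add the retrieved text to the question as context."""
    joined = "\n".join(f"- {c}" for c in context)
    return f"Context:\n{joined}\n\nQuestion: {question}\nAnswer using only the context."

def answer(question: str, llm, documents: list[str] = DOCUMENTS) -> tuple[str, list[str]]:
    """Step 3: ask the LLM with the enriched prompt, and return the sources too."""
    sources = retrieve(question, documents)
    return llm(build_prompt(question, sources)), sources

def echo_llm(prompt: str) -> str:
    """Stand-in for a locally hosted model (an assumption of this sketch)."""
    return f"(model reply to a {len(prompt)}-character prompt)"

reply, sources = answer("How much was my rent payment?", echo_llm)
print(sources)  # the retrieved documents that back the answer
```

Returning the sources alongside the reply is what lets you check where an answer came from.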

RAG overcomes all three weaknesses of the fine-tuning approach:

- There’s no training involved, so it’s cheap.

- Data is fetched only when you ask for it, so it’s always up to date.

- The framework can show you the retrieved documents, so it’s more trustworthy.

RAG imposes little restriction on how you use LLMs. You can still use LLMs as auto-complete, chatbots, semi-autonomous agents, and more. It only makes LLMs more relevant to you.

(I first wrote the elevator pitch above for the LlamaIndex documentation. I hope they don’t mind me double-posting it here.)

(Well, yes, the LLM’s intake of your data is now limited by its context window. It can be tough to have it talk and act like you, if that’s what you’re striving for.)

RAG is battle-tested. The Microsoft-OpenAI duo is way ahead in this game. Enterprise-wise, Azure has added a feature called GPT-RAG. On the consumer side, Bing Chat is a textbook example of a good RAG implementation, and so is GPT-4 with its function-calling capabilities.

LLMs with RAG are Agents

Function-calling deserves an honorable mention here, though it is way out of scope for an article on RAG.

In RAG, the action of retrieval must be executed somehow; thus, it is a function. Functions do not have to be pure (in the mathematical sense); that is, they can have side effects (and, in the programming world, they often do). Therefore, functions are just tools that an LLM can wield. Metaphorically, we call LLMs with tool-using abilities “agents”.
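To make the "functions are tools" idea concrete, here is a minimal sketch of a tool registry. Everything in it is hypothetical (the `NOTES` store, the two tools, and the `call_tool` helper); it only illustrates that one tool can be read-only while another has a side effect, and that both look the same to the caller.

```python
from typing import Callable

NOTES: list[str] = []  # hypothetical in-memory store standing in for real storage

def search_notes(keyword: str) -> str:
    """A read-only tool: it looks things up and changes nothing."""
    hits = [n for n in NOTES if keyword.lower() in n.lower()]
    return "\n".join(hits) or "no matching notes"

def add_note(text: str) -> str:
    """A tool with a side effect: it mutates NOTES."""
    NOTES.append(text)
    return f"saved note #{len(NOTES)}"

# What an agent framework might show the model: name -> (function, description).
TOOLS: dict[str, tuple[Callable[[str], str], str]] = {
    "search_notes": (search_notes, "Find saved notes containing a keyword."),
    "add_note": (add_note, "Save a new note."),
}

def call_tool(name: str, argument: str) -> str:
    """Run the tool the model asked for and return its result as text."""
    if name not in TOOLS:
        return f"unknown tool: {name}"
    function, _description = TOOLS[name]
    return function(argument)

print(call_tool("add_note", "Dentist appointment on Friday"))
print(call_tool("search_notes", "dentist"))
```

Retrieval in RAG is simply the `search_notes` kind of tool; an agent is what you get once tools like `add_note` are allowed too.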

GPT-4 isn’t the only model that can call functions; “ordinary” LLMs can be made agents via a paradigm called “ReAct”. Put simply, rather than asking the LLM to answer your question (or respond to your demand) right away, a ReAct framework:

- introduces the LLM to a list of tools that it can use,

- asks the LLM which tool it would like to use, and how, to get the information it needs to confidently answer your question (or to execute the task that meets your demand),

- runs the corresponding function,

- feeds the result of the tool execution back to the LLM, as if YOU had wielded the tool, observed the result, and described what you saw to the LLM,

- asks whether the LLM now has everything it needs to complete the query, and

- if so, asks it to respond to your request; otherwise, goes back to the second step.
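The loop above might look roughly like this in Python. It is a sketch under stated assumptions: the `Action: tool[input]` and `Final Answer:` reply format, the toy `calculator` tool, and the `scripted_llm` stand-in are all made up for illustration, and a real framework would parse model output far more defensively.

```python
import re
from typing import Callable

def calculator(expression: str) -> str:
    """Toy tool: adds whitespace-separated integers, e.g. '2 3' -> '5'."""
    return str(sum(int(n) for n in expression.split()))

TOOLS: dict[str, Callable[[str], str]] = {"calculator": calculator}

# Reply format we ask the model to follow (an assumption of this sketch).
ACTION = re.compile(r"Action:\s*(\w+)\[(.*?)\]")
FINAL = re.compile(r"Final Answer:\s*(.*)", re.DOTALL)

def react(question: str, llm: Callable[[str], str], max_steps: int = 5) -> str:
    # Introduce the LLM to the tools it can use.
    transcript = (
        f"Tools: {', '.join(TOOLS)}\n"
        "Reply with 'Action: tool[input]' or 'Final Answer: ...'.\n"
        f"Question: {question}\n"
    )
    for _ in range(max_steps):
        reply = llm(transcript)  # ask which tool to use, or for the final answer
        transcript += reply + "\n"
        final = FINAL.search(reply)
        if final:  # the model says it has everything it needs
            return final.group(1).strip()
        action = ACTION.search(reply)
        if action is None:
            transcript += "Observation: could not parse an action.\n"
            continue
        name, argument = action.groups()
        tool = TOOLS.get(name)
        # Run the function, then feed the result back as an observation.
        result = tool(argument) if tool else f"unknown tool {name}"
        transcript += f"Observation: {result}\n"
    return "Stopped: step limit reached."

def scripted_llm(transcript: str) -> str:
    """Stand-in for a real model: acts once, then answers from the observation."""
    if "Observation:" not in transcript:
        return "Thought: I should add the numbers.\nAction: calculator[2 3]"
    observation = transcript.rsplit("Observation:", 1)[1].strip()
    return f"Final Answer: {observation}"

print(react("What is 2 plus 3?", scripted_llm))
```

Note that the model only ever sees and produces text; the loop around it does the actual function calls, and the step limit keeps a confused model from looping forever.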

ReAct democratizes agency for LLMs. Using a purely semantic approach, the ReAct paradigm enables models that weren’t designed to interface with programs to do so. It creates vast possibilities for those who self-host large language models.

Summary

RAG and ReAct are two (nested) concepts that are the future of democratized AI technology. I am confident in the growth of open-source projects like LlamaIndex. If you also believe in a future of ubiquitous, edge-computed LLMs, join us on this journey.